
3.06

12.05.2009 | 3.06 | Author: Domisse


A new release is available. Thanks to everyone who helped improve this package. Your feedback is welcome.

List of improvements :

- Squid reports faster and took less memory using the default precision

- New world map allowing better cities location

- Nicer page reports with poplayer to display pages stats without the need to open each directories

- Sitemap.xml available to help spider to scan your website

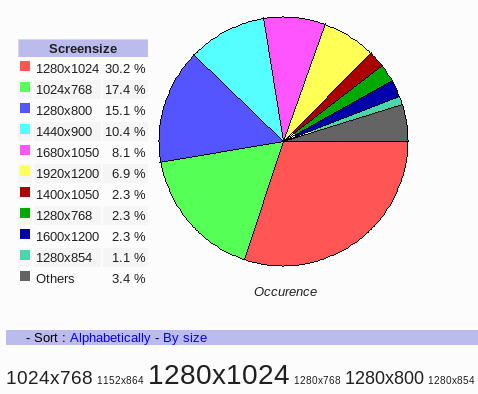

- Pie charts and cloud tags available.

- Can read apahe rotation logfiles with increasing number

- Improved IIS installer (choose webserver and build config file on the fly).

- Many bug fixes ...

Ideas for the next release:

- Support for load-balanced servers

- Table filtering

- Multi-processor support

- Search tools

- New homepage

- Real-time incremental processing to speed things up

- WMI-based IIS 7.0 installer and support for UTF-8 W3C logfiles


3.056 - Mailing list

05.05.2009 | 3.056 - Mailing list | Author: Domisse


Last development release before the next stable one. W3Perl is now listed among the top 10 best free tools. Most users are from the U.S., Germany and Northern Europe; sadly, very few are from my own country, France :(

I've opened a mailing list so I can help you more easily. It is a moderated mailing list, so you need to subscribe first.

W3Perl is now listed in the French Plume project (Promoting economicaL, Useful and Maintained softwarE for the Further Education And THE Research communities).


3.055 - New city maps

28.04.2009 | 3.055 | Author: Domisse


The French hosting provider 1&1 uses Apache logfile rotation, but the index number increases rather than decreases: the biggest index is the most recent logfile! The scripts have been updated to cope with this way of rotating logfiles.
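The key point is the processing order: logs must be read oldest first, and the two conventions reverse which end is oldest. A minimal Python sketch of that ordering, assuming rotated files are named like `access.log.1`, `access.log.2`, ...; the function `order_rotated_logs` and its `increasing` flag are hypothetical names, not W3Perl options. Files without a numeric suffix are simply ignored here.

```python
import re

# Matches a trailing rotation index such as "access.log.12" (assumed naming).
_INDEX_RE = re.compile(r"\.(\d+)$")


def order_rotated_logs(filenames, increasing=False):
    """Return numbered rotated logfiles ordered oldest first.

    increasing=False: classic rotation, ".1" is the newest file.
    increasing=True:  1&1-style rotation, the biggest index is the newest file.
    Files without a numeric suffix are ignored in this sketch.
    """
    numbered = []
    for name in filenames:
        match = _INDEX_RE.search(name)
        if match:
            # Compare indexes as integers so that 10 sorts after 2.
            numbered.append((int(match.group(1)), name))
    # Oldest first: descending index for classic rotation, ascending for 1&1.
    numbered.sort(reverse=not increasing)
    return [name for _, name in numbered]
```

Comparing the suffix as an integer matters: a plain string sort would put `access.log.10` before `access.log.2`.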

New city maps are available. The previous maps used the Robinson projection; I now use the equirectangular projection, which is easier to work with. I downloaded an SVG world map from Wikimedia and used Inkscape to zoom in.
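Why is equirectangular easier? Longitude maps linearly to x and latitude linearly to y, whereas the Robinson projection relies on a table of coefficients with interpolation. A minimal sketch, assuming the map image covers a known latitude/longitude bounding box (the whole world by default, or the box of a zoomed map); `latlon_to_pixel` is a hypothetical helper name.

```python
def latlon_to_pixel(lat, lon, width, height,
                    lon_min=-180.0, lon_max=180.0,
                    lat_min=-90.0, lat_max=90.0):
    """Project (lat, lon) in degrees onto an equirectangular image.

    The bounding box defaults to the whole world; pass the box of a
    zoomed (cropped) map to place cities on a regional map.
    """
    # x grows linearly from the western edge (lon_min) to the eastern edge.
    x = (lon - lon_min) / (lon_max - lon_min) * width
    # Image y grows downwards, so the northern edge (lat_max) is y = 0.
    y = (lat_max - lat) / (lat_max - lat_min) * height
    return x, y
```

Zooming in Inkscape only changes the bounding box, so the same two linear formulas work for every regional map.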


Website moving

20.04.2009 | Website moving | Author: Domisse


I've bought a new domain to host my personal page, so w3perl.com will focus on the package with no other content.

This allows the /softs/ directory to move to the root level. The drawback is that there will be fewer accesses, and the demo will only display stats about the package itself. I'm using the Apache Redirect directive, so requests are redirected to the new domain domisse.fr, and robots can follow the links thanks to the 301 status code.

More information on how to use redirects:

- Redirection Web en HTTP et HTML (Web redirection in HTTP and HTML)

- HTTP redirection handled by personalised 404 error pages


3.053

15.04.2009 | 3.053 | Author: Domisse


New pie charts have been added to the filetype, cities and directories reports. Tag clouds have been introduced in some reports.
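The post does not say how W3Perl sizes the words in a tag cloud. A common approach, sketched here in Python as an assumption rather than W3Perl's actual code, is to interpolate the font size on a logarithmic scale of the hit counts; `tag_cloud_sizes`, `min_size` and `max_size` are hypothetical names.

```python
import math


def tag_cloud_sizes(counts, min_size=10, max_size=32):
    """Map each tag to a font size (in px) from its hit count.

    Sizes are interpolated on a logarithmic scale so that a few very
    popular tags do not flatten all the others to the minimum size.
    Tags with a zero count are skipped.
    """
    logs = {tag: math.log(count) for tag, count in counts.items() if count > 0}
    if not logs:
        return {}
    low, high = min(logs.values()), max(logs.values())
    span = high - low
    sizes = {}
    for tag, value in logs.items():
        # All tags share the largest size when every count is equal.
        ratio = (value - low) / span if span else 1.0
        sizes[tag] = round(min_size + ratio * (max_size - min_size))
    return sizes
```

With a linear scale instead, one dominant keyword would push every other tag to the minimum size; the logarithm keeps mid-range tags readable.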

First sponsor for W3Perl! The webhostingsearch website is offering some help: they now have a link to W3Perl's homepage on their own website.

Some improvements:

- Chrome was counted as the Safari browser

- IP hostnames were not resolved when using resolv_users.csv

- Wrong percentages for country traffic in some specific cases.

- Screen size ratio available


Survey

08.04.2009 | Survey | Author: Domisse


Want to improve W3Perl? Your feedback is welcome. A short survey is available. Feel free to send any comments; it will help!


Faster Squid stats

30.03.2009 | Faster Squid stats | Author: Domisse


To improve speed and memory usage for Squid users, some reports have been moved to the highest precision level (so you can still get them if needed). Script arguments and the top 10 files for each filetype are no longer available at the default level. Destination directories are also restricted to the first depth level.
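Restricting destination directories to the first depth level means every requested URL is collapsed to its host plus top directory before counting, which keeps the table small. A minimal sketch, assuming full `http://` URLs as logged by Squid for plain HTTP requests; `first_level_directory` is a hypothetical helper, not W3Perl code.

```python
from urllib.parse import urlsplit


def first_level_directory(url):
    """Reduce a requested URL to host + first directory level.

    "http://example.com/a/b/c.html" -> "example.com/a/"
    "http://example.com/index.html" -> "example.com/"
    """
    parts = urlsplit(url)
    path = parts.path
    # Drop the file name (everything after the last "/").
    directory = path[: path.rfind("/") + 1] if "/" in path else "/"
    segments = [s for s in directory.split("/") if s]
    if not segments:
        return parts.netloc + "/"
    return parts.netloc + "/" + segments[0] + "/"
```

Feeding these keys into a counter gives one row per top-level directory instead of one per distinct path, which is where the memory saving comes from.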

So I recommend that every Squid user use the latest development package.

A tag cloud is available in the Keyword report. The list of entry points for each keyword is also displayed, so you can check where users landed after typing a keyword into a search engine.
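Entry points per keyword can be derived from (requested page, referer) pairs: the keyword comes from the search engine's referer URL, the entry point is the page that was requested. A minimal sketch, assuming the keyword sits in the `q` query parameter as Google uses; other engines may use other parameter names, which this sketch does not handle. `keyword_entry_points` is a hypothetical name.

```python
from collections import defaultdict
from urllib.parse import parse_qs, urlsplit


def keyword_entry_points(hits):
    """Group landing pages by search keyword.

    `hits` is an iterable of (requested_page, referer) pairs. The keyword is
    read from the referer's "q" query parameter (assumed engine convention).
    """
    entries = defaultdict(set)
    for page, referer in hits:
        # parse_qs decodes "+" and %-escapes, e.g. "log+analyzer" -> "log analyzer".
        query = parse_qs(urlsplit(referer).query)
        for keyword in query.get("q", []):
            entries[keyword.strip().lower()].add(page)
    return {keyword: sorted(pages) for keyword, pages in entries.items()}
```

Lower-casing the keyword merges "Log Analyzer" and "log analyzer" into one row, which is usually what a keyword report wants.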


Sitemaps

24.03.2009 | Sitemaps | Author: Domisse


I was looking for a tool to build a web sitemap... but unfortunately, I was not able to find one!

Most CMSs have their own, but I wanted one for my own website.

Google can crawl your website and produce an XML file following the Sitemaps protocol.

Each entry has four fields: the file location (`loc`), the date of last modification (`lastmod`), the update frequency (`changefreq`) and the priority for robots (`priority`).

As I already have a script which parses a whole website, it was quite easy to build my own XML sitemaps.
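The post does not show the generator itself. A minimal sketch of the serialization step with Python's standard library, assuming entries arrive as (loc, lastmod, changefreq, priority) tuples; `build_sitemap` is a hypothetical name. The element names and namespace are those of the Sitemaps 0.9 protocol.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Serialize (loc, lastmod, changefreq, priority) tuples as sitemap XML."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod, changefreq, priority in entries:
        url = ET.SubElement(urlset, "url")
        # ElementTree escapes "&" and "<" in URLs, as the protocol requires.
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod  # W3C date, e.g. 2009-03-24
        ET.SubElement(url, "changefreq").text = changefreq
        ET.SubElement(url, "priority").text = f"{priority:.1f}"
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body
```

For example, `build_sitemap([("http://www.mydomain.com/", "2009-03-24", "daily", 1.0)])` yields a one-entry sitemap for the homepage.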

The last modification date is extracted from the file properties (mtime); the update frequency is then computed from the number of days since the last modification (a file that is a few days old is flagged as daily; older files are flagged as weekly, monthly or yearly).
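The mtime-to-`changefreq` rule can be sketched as a table of age thresholds. The exact cut-offs are not given in the post, so the 7/31/365-day limits below are hypothetical; `change_frequency` and `lastmod` are illustrative helper names. In practice `mtime` would come from `os.stat(path).st_mtime`.

```python
import time

# Hypothetical age thresholds in days; the post only gives the overall idea.
_FREQUENCIES = [(7, "daily"), (31, "weekly"), (365, "monthly")]


def change_frequency(mtime, now=None):
    """Guess a sitemap <changefreq> value from a file's mtime (epoch seconds)."""
    now = time.time() if now is None else now
    age_days = (now - mtime) / 86400
    for limit, label in _FREQUENCIES:
        if age_days < limit:
            return label
    return "yearly"


def lastmod(mtime):
    """Format an mtime as the W3C date used by <lastmod> (UTC)."""
    return time.strftime("%Y-%m-%d", time.gmtime(mtime))
```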

The priority is computed from the depth location and the date of last access (atime). A file deep in your structure gets a lower priority (so the homepage has the highest rank), and a file which has been requested often gets a higher priority. Both criteria share a 50%/50% weight.
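The 50%/50% combination can be written as the average of two scores in the 0.0 to 1.0 range. Only that weighting comes from the post; the depth score `1 / (1 + depth)` and the linear decay of access recency over a year are hypothetical choices, and `sitemap_priority` is an illustrative name.

```python
def sitemap_priority(depth, days_since_access, max_days=365):
    """Combine depth and last-access recency into a 0.0-1.0 priority.

    depth: 0 for the homepage, 1 for files one directory down, and so on.
    days_since_access: age of the file's atime, in days.
    Both scores are hypothetical formulas; only the 50%/50% weighting
    comes from the post.
    """
    depth_score = 1.0 / (1 + depth)                      # homepage -> 1.0
    recency = min(max(days_since_access, 0), max_days)   # clamp to [0, max_days]
    access_score = 1.0 - recency / max_days              # accessed today -> 1.0
    return round(0.5 * depth_score + 0.5 * access_score, 2)
```

Note that atime is only a rough signal: filesystems mounted with `noatime` or `relatime` update it rarely or never.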

Once this file is available, you can reference it in your robots.txt file, as most crawlers are able to use it to index your website. Of course, you can edit this file to get a better match between priorities and to remove some entries, but keep in mind that the file should not exceed 10 megabytes.

```
User-agent: *
Sitemap: http://www.mydomain.com/w3stats/data/sitemap.xml
```

Sadly, once the XML file was produced, I was not able to find any free tool to convert it into a nice graphic or table. So I decided to use my script once again to improve the document tree. A few days and bug fixes later, I have a first draft.


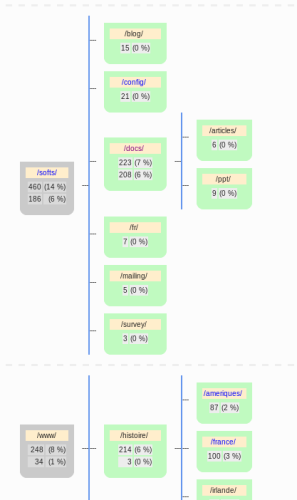

In the page report, the map displays the number of pages read for each directory.

The first number is the number of HTML pages found in the whole directory (including sub-directories).

The second number is the number of HTML pages found only in the directory itself.
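Both numbers can be computed in one pass over the page list: each page is counted once in its own directory, and once in that directory and every ancestor for the subtree total. A minimal sketch, assuming site paths starting with "/" and treating `.html`/`.htm` files as HTML pages; `directory_page_counts` is a hypothetical name.

```python
import posixpath
from collections import Counter


def directory_page_counts(pages):
    """Return {directory: (pages_in_subtree, pages_directly_inside)}.

    `pages` is an iterable of site paths such as "/docs/faq.html".
    Only .html/.htm files are counted.
    """
    direct = Counter()
    total = Counter()
    for page in pages:
        if not page.lower().endswith((".html", ".htm")):
            continue
        directory = posixpath.dirname(page) or "/"
        direct[directory] += 1
        # Credit the page to its directory and to every ancestor directory.
        current = directory
        while True:
            total[current] += 1
            if current == "/":
                break
            current = posixpath.dirname(current) or "/"
    return {d: (total[d], direct[d]) for d in total}
```

Directories that contain only sub-directories get a subtree total with a direct count of 0, which matches the two-number display.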

In the document tree, the whole website is displayed.

There are still some improvements to be made: the vertical line should be shorter.


A few websites about sitemaps:

- http://www.auditmypc.com/site-maps.asp

- http://www.google.com/support/webmasters/bin/answer.py?answer=40318&cbid=1tb5m2p5h7e8i&src=cb&lev=answer

- http://www.outils-referencement.com/outils/sitemaps/generateur

- http://gadelkareem.com/2007/12/10/sitemap-creator-02a-create-sitemaps-09-valid-for-google-yahoo-and-msn-sitemaps/

- http://code.google.com/p/googlesitemapgenerator/downloads/list

- http://freshmeat.net/search?q=sitemaps&submit=Search


3.051

15.03.2009 | 3.051 | Author: Domisse


First round of bug fixes for 3.05.

- Bzip2.exe and the wget/gzip DLLs are now included in the Windows package

- US language was not set

- Yearly stats crashed on heavy websites because the terabyte unit was not set.

- Table sort is now descending rather than ascending

- Added max_item_display to avoid loading HTML files that are too large (default is 10 000 entries)

- Incremental stats stop if launched more than once a day

- Filetypes are now better filtered to avoid a huge list in the proxy report.

- Viewing stats from the web admin now works when the server runs on a dedicated port.

- Empty logfiles are now skipped.
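The descending sort and the `max_item_display` cap work together: sort by hits, highest first, then keep only the top rows so the generated HTML table stays a manageable size. A minimal Python sketch; the parameter mirrors the option named above and its 10 000 default, while `rows_to_display` and the (label, hits) dictionary input are hypothetical.

```python
def rows_to_display(stats, max_item_display=10_000):
    """Sort (label, hits) rows by hits, descending, and cap their number.

    max_item_display mirrors the option named in the changelog; its default
    of 10 000 entries is taken from there, everything else is illustrative.
    """
    ordered = sorted(stats.items(), key=lambda item: item[1], reverse=True)
    return ordered[:max_item_display]
```

Sorting before truncating matters: it guarantees the rows that are dropped are the least-visited ones.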

Planning for 3.051:

- Progress bar

- Pie charts for browsers/filetypes

- Tag cloud

- Add max_item_page

- Table filtering

- Survey

- IIS WMI

- Improve filetype handling and script memory usage
